
Gemma 4 GGUF Download Guide: Safe Sources, Quant Tips, and Local Setup

If you need a Gemma 4 GGUF download, you are already past the “what is this model?” stage. You want the safest way to download Gemma 4, pick the right quant, and get a local run working without wasting hours.

This guide is built for that exact intent. It covers trusted sources, when to use Gemma 4 Hugging Face repos, how to run GGUF builds in llama.cpp, and when Ollama is the simpler route.

Gemma 4 GGUF download: start with the right file, not the biggest file

The biggest mistake in any GGUF workflow is assuming the largest file is automatically the best file.

Before you download Gemma 4, decide which model family member actually fits your machine:

- E2B if you need the lightest entry point

- E4B if you want the best balanced first local run

- 26B A4B if you want stronger output and care about efficiency

- 31B if you want the highest-end dense model

Then choose the quant based on memory. The GGUF path is only useful if the file you pick can run comfortably on your hardware.
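As a rough illustration of "choose the quant based on memory", the sketch below estimates the size of the quantized weights from a parameter count and an approximate bits-per-weight figure. The bits-per-weight table, the 2 GiB headroom, and the function names are assumptions made for this example, not published numbers for Gemma 4; check the real file size on the repository page before downloading.

```python
# Rough GGUF size estimate: parameters * bits-per-weight / 8.
# The bits-per-weight values below are approximate assumptions for common
# llama.cpp quant types, not measured figures for any Gemma 4 file.
APPROX_BITS_PER_WEIGHT = {
    "Q4_K_M": 4.8,
    "Q5_K_M": 5.7,
    "Q6_K": 6.6,
    "Q8_0": 8.5,
}

GIB = 1024 ** 3


def estimate_file_gib(n_params: float, quant: str) -> float:
    """Approximate size of the quantized weights in GiB."""
    return n_params * APPROX_BITS_PER_WEIGHT[quant] / 8 / GIB


def fits_in_memory(n_params: float, quant: str, memory_gib: float,
                   headroom_gib: float = 2.0) -> bool:
    """True if the weights plus a fixed headroom fit in the given memory.

    The headroom stands in for context cache and runtime overhead; the
    2 GiB default is an arbitrary placeholder.
    """
    return estimate_file_gib(n_params, quant) + headroom_gib <= memory_gib
```

For example, `fits_in_memory(31e9, "Q4_K_M", 24)` asks whether a model with roughly 31 billion parameters at a 4-bit quant would fit in 24 GiB under these assumptions. Treat the answer as a first filter, not a guarantee.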

If you still need help choosing the model, read "What is Gemma 4?" first.

What is Gemma 4 GGUF?

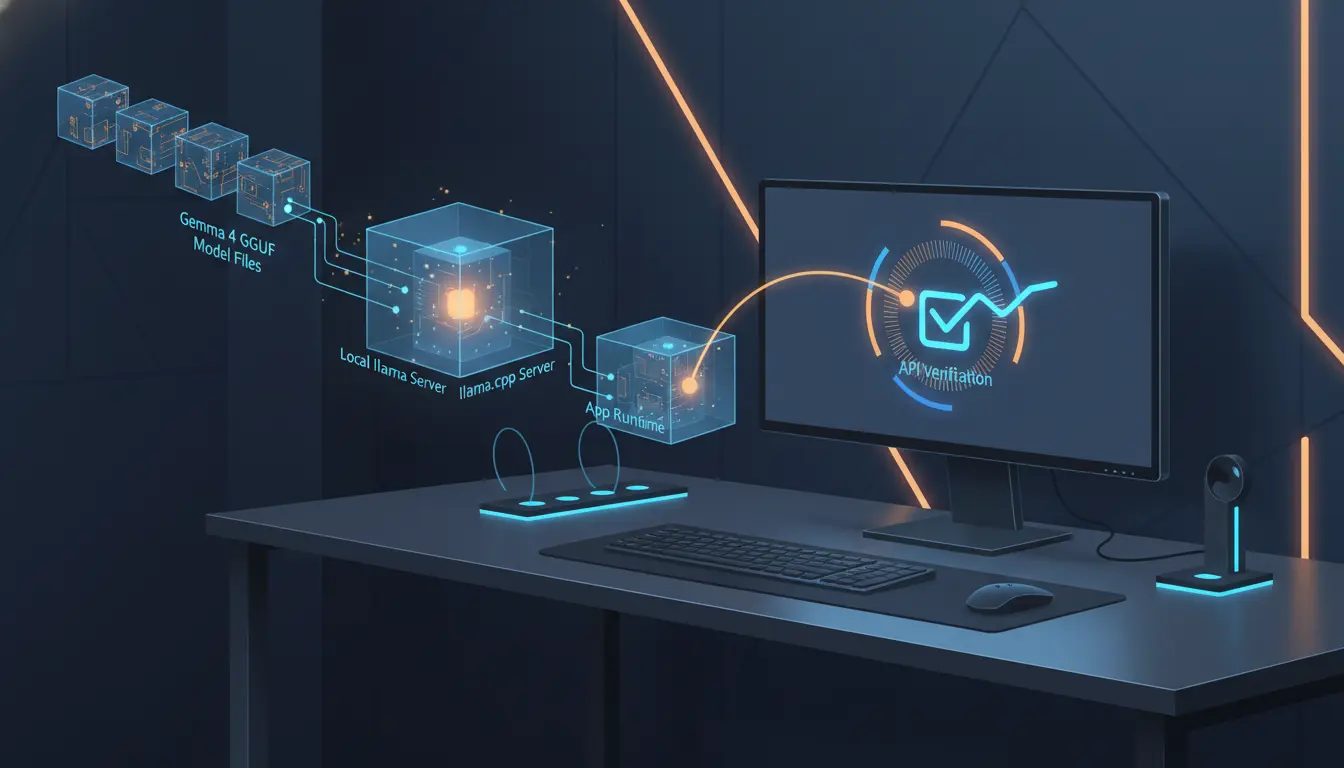

GGUF is the binary model file format used by llama.cpp. A Gemma 4 GGUF file packs the model weights, usually quantized, together with the metadata a runtime needs to load them, in a single file designed for efficient local inference.

In practice, Gemma 4 GGUF matters because it is the format most local users want when they need:

- llama.cpp compatibility

- quantized files for smaller memory budgets

- a direct path to local CLI or local server deployment

For many local builders, Gemma 4 GGUF is the most practical bridge between model availability and a usable self-hosted workflow.

If your goal is local control, the GGUF route is often the fastest path.
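To make the "metadata-aware packaging" point concrete, here is a minimal sketch that reads only the fixed header at the start of a GGUF file. It assumes a little-endian GGUF version 2 or 3 file, where the magic bytes are followed by a 32-bit version and two 64-bit counts; the `GGUFHeader` and `read_gguf_header` names are made up for this example.

```python
import struct
from typing import BinaryIO, NamedTuple


class GGUFHeader(NamedTuple):
    version: int
    tensor_count: int
    metadata_kv_count: int


def read_gguf_header(stream: BinaryIO) -> GGUFHeader:
    """Read the fixed-size header at the start of a GGUF v2/v3 file."""
    magic = stream.read(4)
    if magic != b"GGUF":
        raise ValueError(f"not a GGUF file (magic={magic!r})")
    # Little-endian: uint32 version, uint64 tensor count, uint64 metadata KV count.
    raw = stream.read(20)
    if len(raw) != 20:
        raise ValueError("truncated GGUF header")
    version, tensor_count, kv_count = struct.unpack("<IQQ", raw)
    return GGUFHeader(version, tensor_count, kv_count)
```

Opening a downloaded file in binary mode and passing it to `read_gguf_header` is a quick way to confirm that a download is at least a GGUF file and not an HTML error page saved under a `.gguf` name.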

Trusted sources for Gemma 4 GGUF download

Not every GGUF source carries the same trust level.

Here is the practical source hierarchy:

| Source | What you get | Best use |
|---|---|---|
| ggml-org on Hugging Face | Official-style GGUF repos aligned with llama.cpp usage | Best first stop for llama.cpp users |
| Ollama library | Managed local install of Gemma 4 builds | Best first stop for simplicity |
| bartowski on Hugging Face | Many quantized community GGUF files | Best when you need more quant choices |
| Official Google / Kaggle weights | Safetensors and first-party distribution context | Best for provenance checks, not direct GGUF use |

If your main concern is provenance, start your research with official Google Gemma 4 channels and then move to the trusted llama.cpp ecosystem. If your main concern is convenience, Ollama is often the better answer than a manual Gemma 4 download.
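Whichever source you pick, one simple provenance check is to compare the SHA-256 of the downloaded file with the digest shown on the repository's file page, assuming the host publishes one. The sketch below hashes the file in chunks so a multi-gigabyte GGUF does not have to fit in RAM; the function names are hypothetical.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path: str, expected_hex: str) -> bool:
    """Compare a local file against a digest copied from the source page."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

A mismatch usually means a truncated or corrupted download rather than tampering, but either way the file should be downloaded again before use.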

Gemma 4 Hugging Face options

For many readers, the most practical GGUF path starts on Gemma 4 Hugging Face repositories.

You usually have two Hugging Face paths:

- Use ggml-org repos for a more direct llama.cpp-friendly GGUF route

- Use community quant repos such as bartowski when you need a pinned file like Q4_K_M

That is why Hugging Face matters so much in a local setup. It is not just where you browse; it is where you choose between first-party-style alignment and broader community quant options.

Fastest Gemma 4 GGUF path with llama.cpp

The quickest route in llama.cpp is the direct -hf mode.

Install llama.cpp first:

brew install llama.cpp

Or on Windows:

winget install llama.cpp

Then verify:

llama-cli --version

llama-server --version

Now run the simplest server-style GGUF flow:

llama-server -hf ggml-org/gemma-4-26B-A4B-it-GGUF

That command lets llama.cpp handle the Gemma 4 download and local cache automatically. By default llama-server listens on http://localhost:8080 and exposes an OpenAI-compatible API, so if you want a local endpoint, this is the smoothest route.

Manual Gemma 4 download with Hugging Face CLI

Some users want a pinned, explicit file instead of an automatic pull.

That is where manual Gemma 4 download commands help:

pip install -U "huggingface_hub[cli]"

huggingface-cli download bartowski/google_gemma-4-31B-it-GGUF \
  --include "google_gemma-4-31B-it-Q4_K_M.gguf" \
  --local-dir ./

Then run it locally:

llama-cli -m ./google_gemma-4-31B-it-Q4_K_M.gguf -p "In one sentence, what is Gemma 4?"

This style of setup is better when you care about exact file names, exact quant choices, or repeatable deployment scripts.
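For repeatable deployment scripts, the same pinned download can be sketched in Python with `hf_hub_download` from the `huggingface_hub` package. The wrapper name `download_pinned_gguf` and the injectable `downloader` parameter are assumptions made for this example so the logic can be exercised without network access; the repository and file names you pass in should be the ones you have confirmed on the repo page.

```python
from typing import Callable, Optional


def download_pinned_gguf(repo_id: str, filename: str, local_dir: str = ".",
                         revision: Optional[str] = None,
                         downloader: Optional[Callable[..., str]] = None) -> str:
    """Download one named file from a Hugging Face repo and return its local path.

    Passing a commit hash as `revision` pins the exact file version, which
    is what makes a deployment script repeatable.
    """
    if downloader is None:
        # Imported lazily so this module loads even if huggingface_hub is missing.
        from huggingface_hub import hf_hub_download
        downloader = hf_hub_download
    return downloader(repo_id=repo_id, filename=filename,
                      local_dir=local_dir, revision=revision)
```

Calling `download_pinned_gguf("bartowski/google_gemma-4-31B-it-GGUF", "google_gemma-4-31B-it-Q4_K_M.gguf")` would mirror the CLI command above. Leaving `revision` as `None` follows the repository's default branch, so pin a commit hash once you have a file that works.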

Easiest way to download Gemma 4 if you do not want manual files

A full manual GGUF workflow is not always the right choice for beginners.

If you mainly want a simple local run, use Ollama:

ollama --version

ollama pull gemma4

ollama list

ollama run gemma4 "Give me a one-line test."

You can also pull size-specific tags such as gemma4:e2b, gemma4:e4b, gemma4:26b, and gemma4:31b when those tags fit your plan.

For many people, this is a better first Gemma 4 download path than a manual GGUF workflow, because Ollama manages the local model lifecycle for you.

How to verify your Gemma 4 GGUF setup worked

Your GGUF setup is only complete when you verify the runtime.

For llama.cpp server mode:

curl http://localhost:8080/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer no-key" \
  -d '{
    "messages": [
      {"role": "system", "content": "You are a helpful assistant."},
      {"role": "user", "content": "In one sentence: what is Gemma 4?"}
    ]
  }'
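If you would rather script this check than run curl by hand, the same request can be sketched with Python's standard library. The helper names are hypothetical, and the code assumes the server is running on the default `http://localhost:8080` and returns an OpenAI-style `choices[0].message.content` field.

```python
import json
import urllib.request


def build_chat_request(prompt: str,
                       base_url: str = "http://localhost:8080") -> urllib.request.Request:
    """Build the same POST request as the curl command, without sending it."""
    body = {
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ]
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": "Bearer no-key"},
        method="POST",
    )


def first_reply(response_json: dict) -> str:
    """Extract the assistant text from an OpenAI-style chat completion."""
    return response_json["choices"][0]["message"]["content"]


def ask(prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=timeout) as resp:
        return first_reply(json.load(resp))
```

Calling `ask("In one sentence: what is Gemma 4?")` while llama-server is running should return a short string. A connection error means the server is not listening on that port, and a `KeyError` means the response did not have the expected shape.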

For Ollama:

curl http://localhost:11434/api/generate -d '{
  "model": "gemma4",
  "prompt": "Give a one-line test output."
}'
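By default the Ollama generate endpoint streams its answer as one JSON object per line, so the curl output looks like many small fragments rather than one reply. The sketch below, with a hypothetical function name, joins the `response` fields of such a stream into a single string and stops at the object marked `done`.

```python
import json
from typing import Iterable


def join_generate_stream(lines: Iterable[bytes]) -> str:
    """Concatenate the `response` fragments from a streamed /api/generate reply."""
    parts = []
    for raw in lines:
        if not raw.strip():
            continue  # skip blank keep-alive lines
        chunk = json.loads(raw)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break
    return "".join(parts)
```

The function accepts any iterable of lines, including the response object returned by `urllib.request.urlopen`. If you prefer a single JSON object instead, adding `"stream": false` to the request body asks Ollama to return the whole reply at once.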

If your setup passed both file loading and a real prompt response, you are in a good place to move on to actual task testing.

Common Gemma 4 GGUF problems

These are the first-week problems people actually hit.

Problem 1: the model loads poorly or fails in a GUI

Some GUI tools lag behind llama.cpp support after a new model release. If your GGUF build fails in one app, test the same model in the latest llama.cpp before you assume the file is broken.

Problem 2: out-of-memory errors

If a GGUF build loads but crashes or runs badly, the fix is usually one of these:

- choose a smaller quant

- choose a smaller model

- lower the context size (in llama.cpp, the -c / --ctx-size option)

- stop assuming 31B is the correct first test
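To see why lowering the context size helps, it can be useful to estimate the key/value cache, which grows linearly with the number of context tokens. The sketch below uses the standard full-attention formula with 16-bit cache values. The layer count, head count, and head size in the example are hypothetical placeholders, not Gemma 4's real architecture, and models that use sliding-window or shared-KV attention will need less than this upper bound.

```python
GIB = 1024 ** 3


def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 context_tokens: int, bytes_per_value: int = 2) -> float:
    """Upper-bound KV cache size in GiB for full attention in every layer."""
    # Factor of 2: one key tensor and one value tensor per layer.
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / GIB
```

With placeholder values of 48 layers, 8 KV heads, and a head size of 128, going from 32768 to 8192 context tokens cuts the estimate to a quarter. The real values for a given file can be read from its GGUF metadata.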

Problem 3: modality mismatch

The model family may support audio on paper, but local tools can lag behind that support. A GGUF file does not guarantee every runtime exposes every modality on day one.

Where to go after your Gemma 4 GGUF setup

Once your GGUF setup is complete, these guides are the best next steps:

- What is Gemma 4?

- Gemma 4 review

- How to run Gemma 4 with llama.cpp

- How to run Gemma 4 in Ollama

- Gemma 4 hardware requirements

Final Gemma 4 GGUF download recommendation

The final recommendation from this guide is simple: if you want control, use ggml-org or a trusted Gemma 4 Hugging Face GGUF source with llama.cpp; if you want convenience, use Ollama; and if you want the highest chance of success, start smaller than you think.

A successful Gemma 4 GGUF download is not about grabbing the first file you see. It is about choosing the right source, the right quant, and the right runtime from the start.
