
What Is Gemma 4 AI? Google Gemma 4 Release, Models, and How to Start

If you are asking what Gemma 4 is, the short answer is that it is Google DeepMind's newest open-weight multimodal model family for local, edge, and server use.

That definition is useful, but it is not enough. A fuller one is that Google Gemma 4 is a family of four models, released on April 2, 2026, that covers everything from lightweight local experiments to serious workstation and production workloads.

What is Gemma 4?

The clearest answer is this: Google Gemma 4 is an Apache 2.0 licensed model family that includes E2B, E4B, 26B A4B, and 31B.

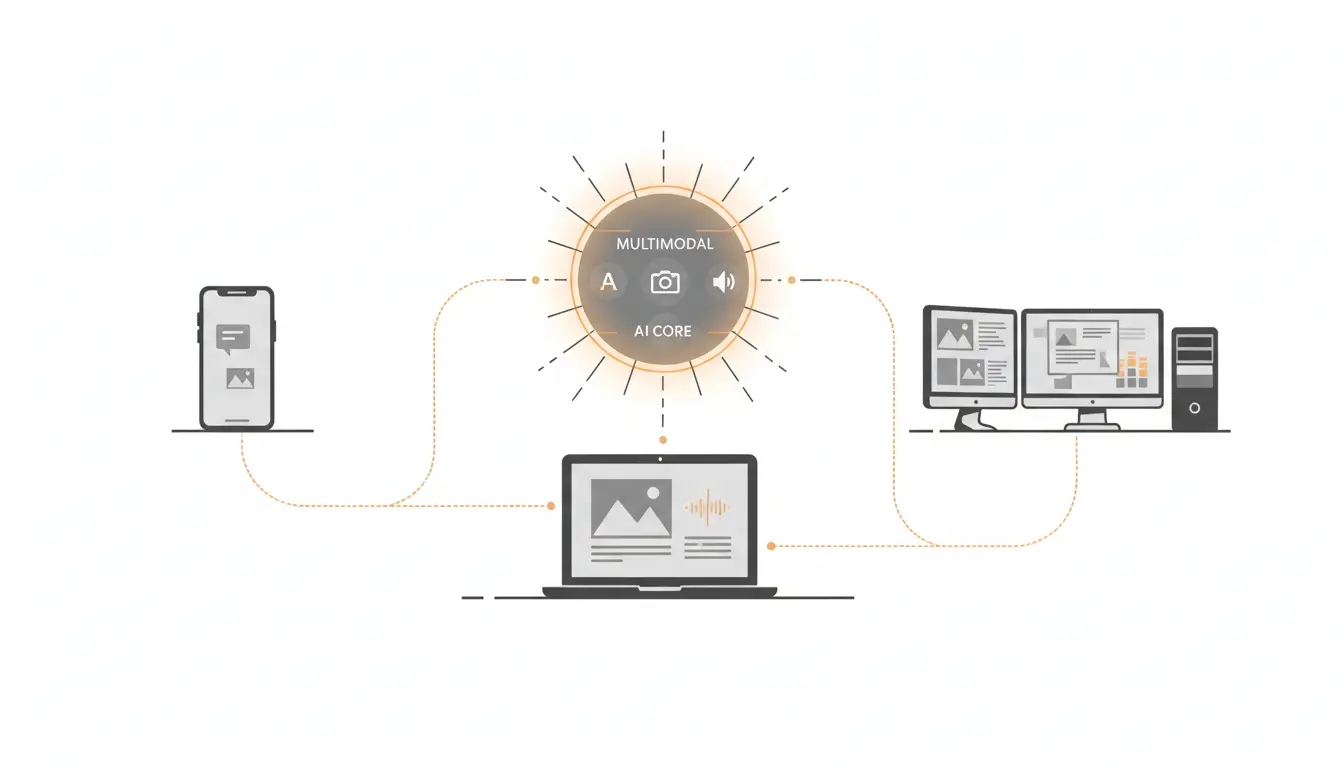

In practical terms, Gemma 4 AI gives you:

- text and image input across the full family

- audio input on the smaller edge models

- 128K context on E2B and E4B

- 256K context on 26B A4B and 31B

- Apache 2.0 licensing, which carries fewer custom restrictions than earlier Gemma releases

So when someone asks about Gemma 4, the important part is not only that it is a new model. It is that Google Gemma 4 is structured as a full lineup with clear roles.

Why the Google Gemma 4 release matters

The Gemma 4 release matters for two reasons.

First, Gemma 4 arrives with strong official benchmark positioning in reasoning, coding, science, and multimodal tasks. Second, the release moved the family to Apache 2.0, which makes it much easier to evaluate for commercial and internal use.

That is why so many people ask the question with a decision in mind. They are not only curious about the model. They are deciding whether Google Gemma 4 is worth building around.

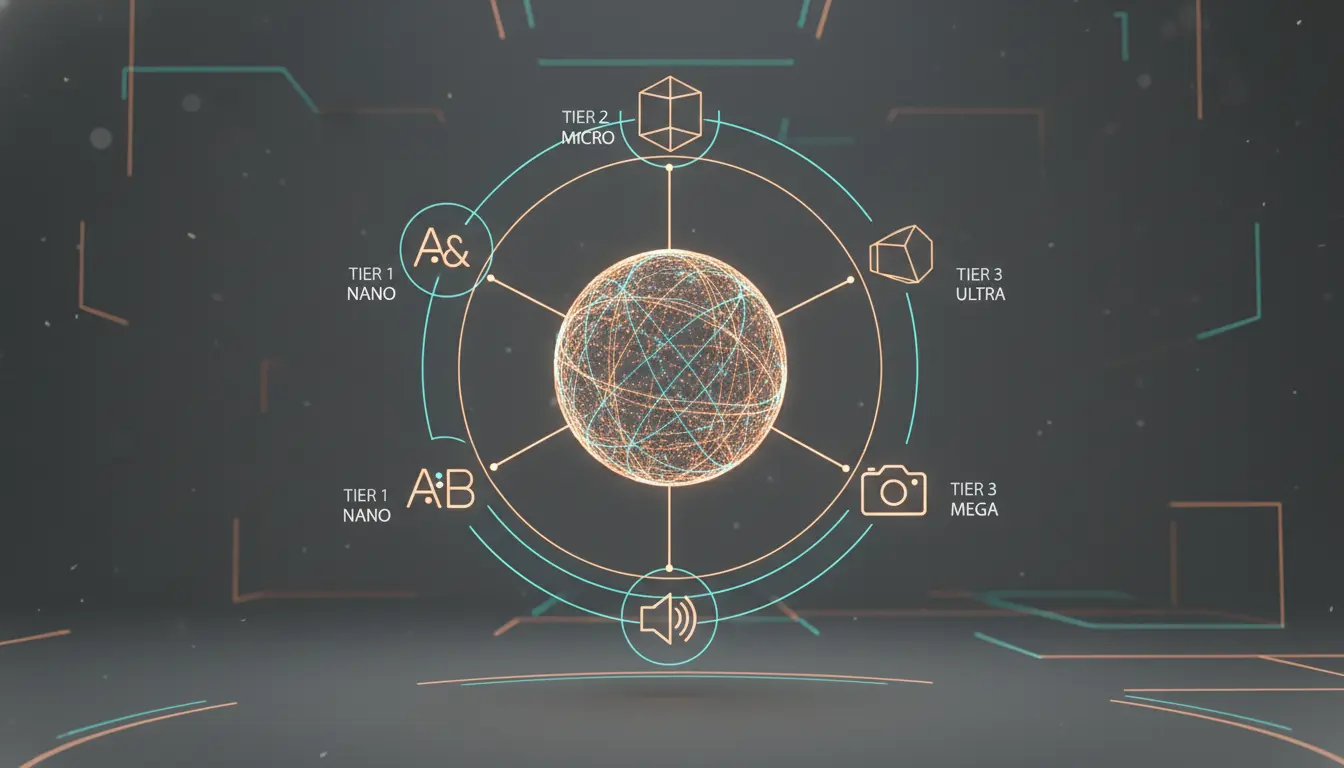

Google Gemma 4 model lineup

To decide whether the family is worth building around, you need to understand the four models.

| Model | Architecture | Context window | Modalities | Approx. Q4 memory |
|---|---|---|---|---|
| Gemma 4 E2B | Edge-oriented dense | 128K | Text, image, audio | 3.2 GB |
| Gemma 4 E4B | Edge-oriented dense | 128K | Text, image, audio | 5.0 GB |
| Gemma 4 26B A4B | MoE | 256K | Text, image | 15.6 GB |
| Gemma 4 31B | Dense | 256K | Text, image | 17.4 GB |

This table is why Gemma 4 AI is easier to plan around than many launches. Google Gemma 4 does not force you into one giant model if your machine or budget says otherwise.
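As a rough illustration of planning around the table, the sketch below picks the model with the largest Q4 footprint that still fits a given memory budget. The memory figures are copied from the table; the `headroom_gb` reserve for the KV cache, runtime, and operating system is an assumed placeholder, not a measured value, and the function name is hypothetical.

```python
# Approximate Q4 memory figures copied from the lineup table (GB).
Q4_MEMORY_GB = {
    "Gemma 4 E2B": 3.2,
    "Gemma 4 E4B": 5.0,
    "Gemma 4 26B A4B": 15.6,
    "Gemma 4 31B": 17.4,
}


def largest_model_that_fits(available_gb, headroom_gb=2.0):
    """Return the model with the largest Q4 footprint that fits, or None.

    headroom_gb is an ASSUMED reserve for the KV cache, runtime, and OS.
    Tune it for your own context length and setup.
    """
    budget = available_gb - headroom_gb
    fitting = [(gb, name) for name, gb in Q4_MEMORY_GB.items() if gb <= budget]
    # max() compares the memory figure first, so the largest footprint wins.
    return max(fitting)[1] if fitting else None


print(largest_model_that_fits(8.0))   # budget of 6.0 GB after headroom
print(largest_model_that_fits(24.0))  # budget of 22.0 GB after headroom
```

Long contexts increase memory use beyond the weights, so treat the result as a starting point rather than a guarantee.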

Which Gemma 4 model should you start with?

A practical way to answer it is to ask which version fits your machine.

- Start with E2B if you want the lowest memory barrier.

- Start with E4B if you want the best-balanced local trial.

- Look at 26B A4B if you want stronger output with a more efficient high-end design.

- Choose 31B if you want the best quality in the family and you have the hardware to support it.

For most readers asking this question, E4B is the easiest recommendation. It is large enough to feel serious and small enough to make local testing realistic.

What does “effective parameters” mean in Gemma 4 AI?

This part confuses many first-time readers of the Gemma 4 release.

The smaller models are described as “effective parameter” edge models, while 26B A4B is a mixture-of-experts (MoE) model that activates only part of its parameters for each token during inference. Neither label makes memory planning disappear. Gemma 4 AI still has to hold its weights in memory, and the larger MoE model still requires a substantial footprint even though not all parameters are active on every token.

That is why the most useful beginner answer includes memory planning, not just model names.
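The arithmetic behind that point can be sketched in a few lines. The example assumes, from the model name alone, that 26B A4B has roughly 26 billion total and roughly 4 billion active parameters; those counts are an assumption, not published figures quoted here. It computes raw weight storage at exactly 4 bits per weight, which is a lower bound.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Raw weight storage in GB: parameters x bits per weight / 8 bits per byte."""
    return params_billion * bits_per_weight / 8


# ASSUMED from the name "26B A4B": about 26B total, about 4B active per token.
TOTAL_PARAMS_B = 26
ACTIVE_PARAMS_B = 4

resident_gb = weight_memory_gb(TOTAL_PARAMS_B, 4)  # all experts must stay loaded
active_gb = weight_memory_gb(ACTIVE_PARAMS_B, 4)   # weights read for one token

print(f"resident weights: {resident_gb} GB")
print(f"active per token: {active_gb} GB")
```

Under these assumptions the resident figure is 13 GB, below the 15.6 GB listed for Q4 in the lineup table. One plausible reason for the gap is that practical 4-bit formats store scaling data and keep some tensors at higher precision. Either way, the footprint follows total parameters, while the active count mainly affects compute per token.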

Are the Gemma 4 benchmarks actually good?

For beginners, the right starting answer is not a giant spreadsheet. It is a simple interpretation of the published results.

The 31B and 26B A4B models post strong official numbers on AIME 2026, LiveCodeBench v6, MMMU Pro, and GPQA Diamond. They also show competitive performance on Arena-style preference snapshots. In plain language, Gemma 4 AI is not interesting only because it is open. It is interesting because the top models are genuinely competitive.

That competitive positioning is a big reason Gemma 4 AI has become more than just a curiosity release.

For teams evaluating open models in 2026, Google Gemma 4 stands out because it is unusually easy to map to real hardware and real deployment choices.

If you want the detailed score breakdown, read the full Gemma 4 review.

Why Apache 2.0 matters

The licensing shift is a major part of the story.

Google Gemma 4 uses Apache 2.0, which is a standard permissive software license. For many teams, that means fewer custom restrictions, less legal uncertainty, and a more straightforward path to product evaluation. If you compare the Gemma 4 release to earlier Gemma generations, this is one of the biggest operational improvements.

So if you ask the question from a business angle, the answer is not just “a new Google model.” It is “a new Google model that is much easier to adopt.”

How do you start with Gemma 4 AI?

The best beginner path depends on what kind of start you want.

If you want the easiest local route, use Ollama.
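For the Ollama route, a minimal sketch of calling a locally running Ollama server from Python might look like the following. It uses Ollama's local HTTP endpoint on its default port. The model tag `gemma4:e4b` is a hypothetical placeholder, not a confirmed name, so check `ollama list` or the Ollama model library for the real tag before running it.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4:e4b"  # HYPOTHETICAL tag: replace with the name `ollama list` shows


def build_request(prompt, model=MODEL_TAG):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model=MODEL_TAG):
    """Send the prompt and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


# Example (requires a running Ollama server with the model already pulled):
# print(generate("Explain a mixture-of-experts model in one sentence."))
```

Keeping request construction separate from the network call makes it easy to inspect the payload without a server running.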

If you want more control and GGUF support, use llama.cpp.


Final answer: what is Gemma 4?

The final answer is that Gemma 4 AI is Google DeepMind's Apache 2.0 open-weight model family for people who want modern multimodal capability without locking themselves into one deployment path.

If you want the shortest takeaway, the real question is: “Is there now a Google Gemma 4 lineup that is strong enough, clear enough, and open enough to build on?” The answer is yes, and the easiest place to continue is the Gemma 4 review or the Gemma 4 GGUF download guide.
